Elon University Study Warns AI's Greatest Threat Is 'Superstupidity,' Not Superintelligence

New research identifies erosion of human judgment and shared reality as primary AI risks, challenging industry focus on existential threats from advanced systems.

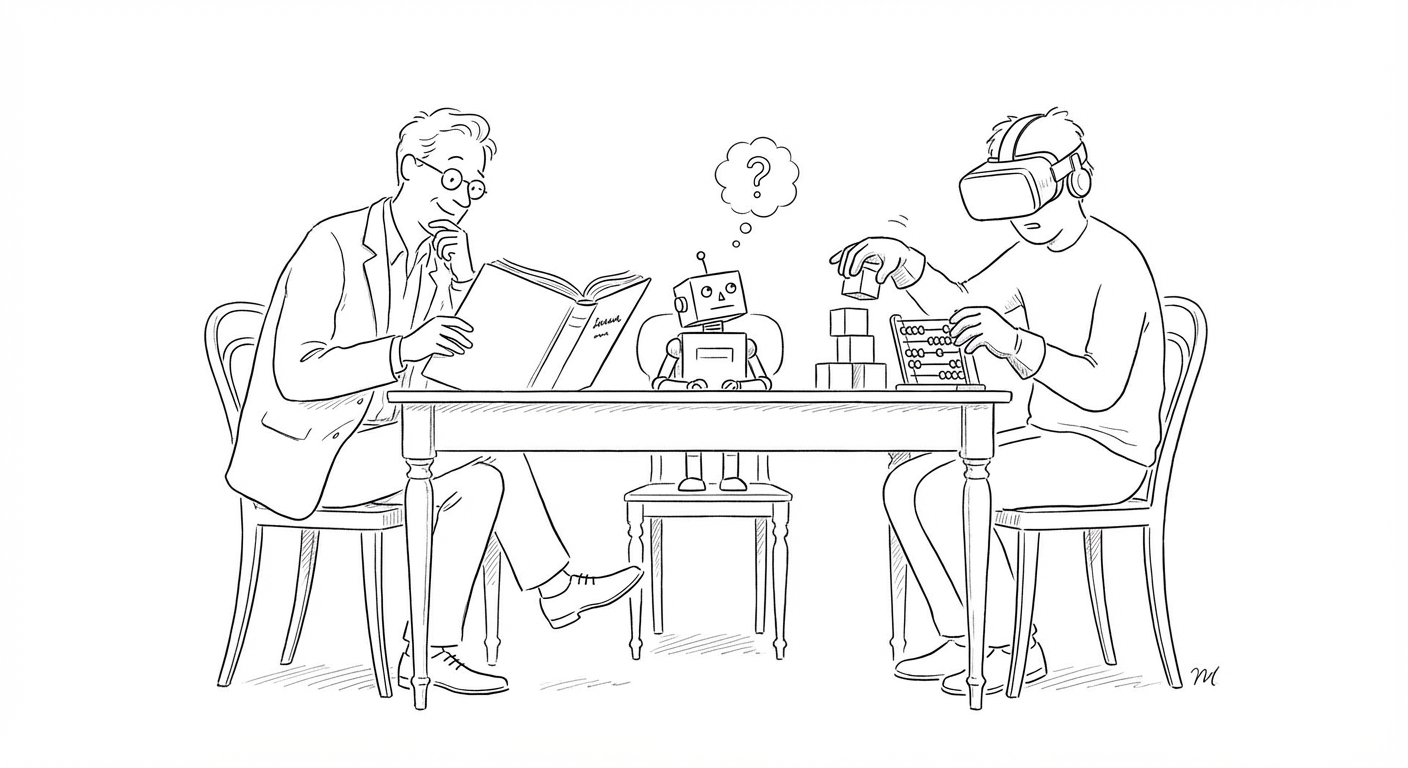

A study from Elon University is reframing the AI risk debate. It warns that the technology's greatest danger lies not in machines becoming too intelligent but in humans becoming less capable of critical thought, a phenomenon the researchers call "superstupidity."

Report co-author Janna Anderson cautioned that accelerating AI adoption will lead to a slow, cumulative erosion of human agency—a drift that can look like progress but steadily weakens human judgment, accountability and shared truth. Anderson wrote that experts fear "accelerated AI use will lead to a cumulative reallocation of human agency, until people and institutions find it harder to question, contest or even notice what has changed."

The research identifies a broader collapse of shared reality as AI systems mediate more information flows. Alison Poltock, co-founder of AI Commons UK, described a moment of "epistemic shift" in which the frameworks shaping identity and social orientation are changing with no shared civic conversation to process it. "We are operating on outdated institutional architecture," she wrote, "strapping jetpacks to systems built for another age."

Stephan Adelson, president of Adelson Consulting Services, predicted the consequences could extend to mental health. He warned that "AI psychosis and other forms of mental illness will arise" as the erosion of a stable foundational reality creates new vulnerabilities—and that entirely new approaches to diagnosing and treating mental illness will be needed as a result.

The findings arrive as practical evidence of AI's disruptive effects accumulates across multiple sectors. Challenger, Gray & Christmas reported 52,050 US tech job cuts so far in 2026, with AI accounting for 25 percent of March reductions. Meanwhile, SEO firms and vendor blogs are publishing self-serving "best of" listicles that use structured formatting to skew AI summaries and citations. AI-powered search systems, including Google's Gemini and chatbot overviews, frequently surface these listicles as authoritative.

(The Elon University research was published by Govtech and reflects input from multiple technology policy experts and consultants. The study does not specify a formal publication date or peer-review status.)

The "superstupidity" framing stands in sharp contrast to the dominant AI safety narrative, which has centered on hypothetical risks from artificial general intelligence or superintelligent systems. Industry leaders and policymakers have invested heavily in governance frameworks aimed at preventing runaway AI capabilities. The Elon research suggests the more immediate threat is the gradual atrophy of human cognitive skills as people increasingly defer decisions to AI systems.

Congressional staffers have separately noted that federal agencies face challenges related to reduced staffing, inconsistent modernization efforts, and artificial intelligence risks. Those challenges underscore the institutional strain as AI adoption accelerates without corresponding capacity-building.

Sources

https://www.govtech.com/education/higher-ed/elon-university-research-warns-greatest-ai-risk-is-superstupidity

Primary source on Elon University research warning of cumulative erosion of human agency and shared reality from AI adoption

https://letsdatascience.com/news/ai-drives-widespread-tech-job-cuts-c19d0c14

Documents 52,050 US tech job cuts in 2026 with AI accounting for 25% of March layoffs, illustrating workforce disruption

https://letsdatascience.com/news/marketers-manipulate-ai-search-with-self-serving-listicles-90cb4750

Reveals how SEO firms exploit AI search systems with self-serving content, demonstrating information integrity erosion

https://www.govinfosecurity.com/congress-confronts-cyber-workforce-ai-risks-a-31176

Congressional perspective on federal agencies struggling with workforce gaps and AI risks amid institutional strain