AI Bug Detection Surpasses Human Researchers as Open-Source Projects See Quality Surge

Anthropic's Opus 4.5 triggered a shift in vulnerability reporting quality, with cURL fixing more flaws in three months than in either of the previous two full years.

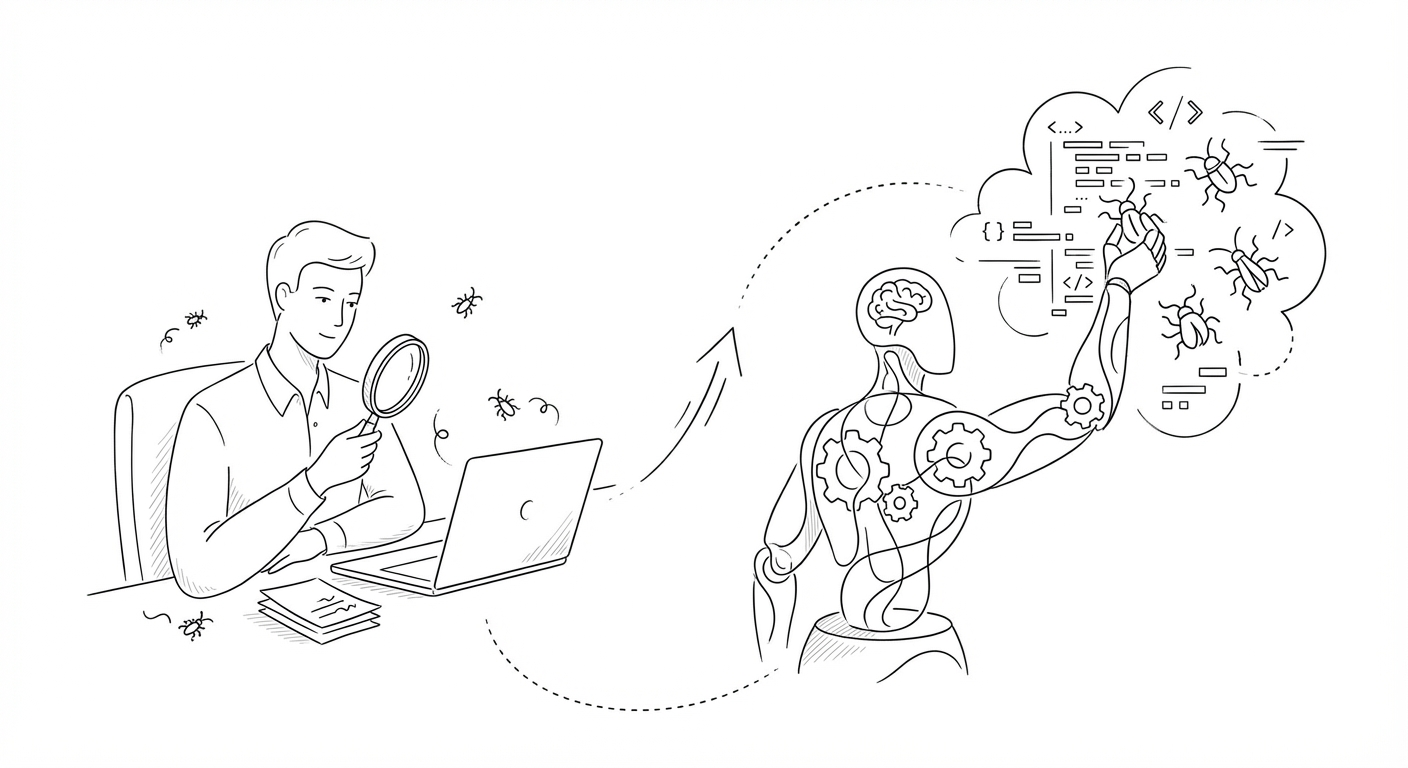

Artificial intelligence models have crossed a threshold in software security research, now outperforming human experts at identifying bugs and vulnerabilities in widely deployed code, according to security leaders and open-source maintainers tracking the shift in early 2026.

The change became visible following the November 2025 release of Anthropic's Opus 4.5 model, which coincided with a dramatic improvement in the quality of security reports submitted to open-source projects. Daniel Stenberg is lead developer of cURL, a 30-year-old data transfer tool embedded in cars, medical devices, and countless internet-connected systems. He said his team identified and patched more vulnerabilities in the first three months of 2026 than in either of the previous two years.

"LLMs have now bypassed human capability for bug finding," said Alex Stamos, chief security officer at Corridor and former head of security at Yahoo and Facebook. The volume of reports submitted to projects like cURL has remained high, but the proportion of low-quality submissions has collapsed. Stenberg estimates that nearly all poor-quality reports have disappeared, with roughly one in ten flagged issues now representing genuine security vulnerabilities and the remainder identifying legitimate bugs.

Stenberg himself has adopted AI tools for internal code review, discovering over 100 bugs with a single query in code that had already passed multiple rounds of human inspection and traditional static analysis. He described the results as "almost magical."

The implications extend beyond individual projects. Because commercial software relies heavily on open-source components, improvements in the security posture of foundational libraries like cURL ripple across the broader internet ecosystem, Stamos noted.

(Anthropic has separately warned that its newest models present "unprecedented cybersecurity risks," though the company has not publicly detailed the nature of those concerns. The firm is also reportedly planning a $200 million private equity venture to sell AI tools to portfolio companies, and recently hired Microsoft's Eric Boyd to lead infrastructure.)

The shift in bug-finding capability arrives as AI companies face mounting scrutiny over model safety and dual-use risks. Anthropic's public warnings about cybersecurity threats contrast with the security benefits now visible in open-source maintenance workflows, a reminder that advanced language models are double-edged: the same capabilities serve both defenders and potential adversaries.

Sources

https://www.npr.org/2026/04/11/nx-s1-5778508/anthropic-project-glasswing-ai-cybersecurity-mythos-preview

Detailed account of cURL maintainer's experience with AI-driven bug reports and quality shift following Opus 4.5 release

https://www.platformer.news/anthropic-mythos-cybersecurity-risk-experts/

Corporate context on Anthropic's $200 million PE venture and infrastructure hiring amid broader AI industry developments

https://gizmodo.com/anthropics-new-model-is-so-scarily-powerful-it-wont-be-released-anthropic-says-2000743234

Emphasis on Anthropic's warnings about unprecedented cybersecurity risks posed by its newest models

https://letsdatascience.com/news/marketers-manipulate-ai-search-with-self-serving-listicles-90cb4750

Broader context on AI manipulation concerns and breach-ready security posture across society